A sandbox platform built from the ground up for AI agent workloads

Every call gets a fresh sandbox in under 20ms, torn down when execution ends. State is saved as a filesystem diff between calls. Credentials are injected at runtime and wiped on teardown. Or skip our cloud and run it all in your VPC with one Helm chart. Your infra, your rules.

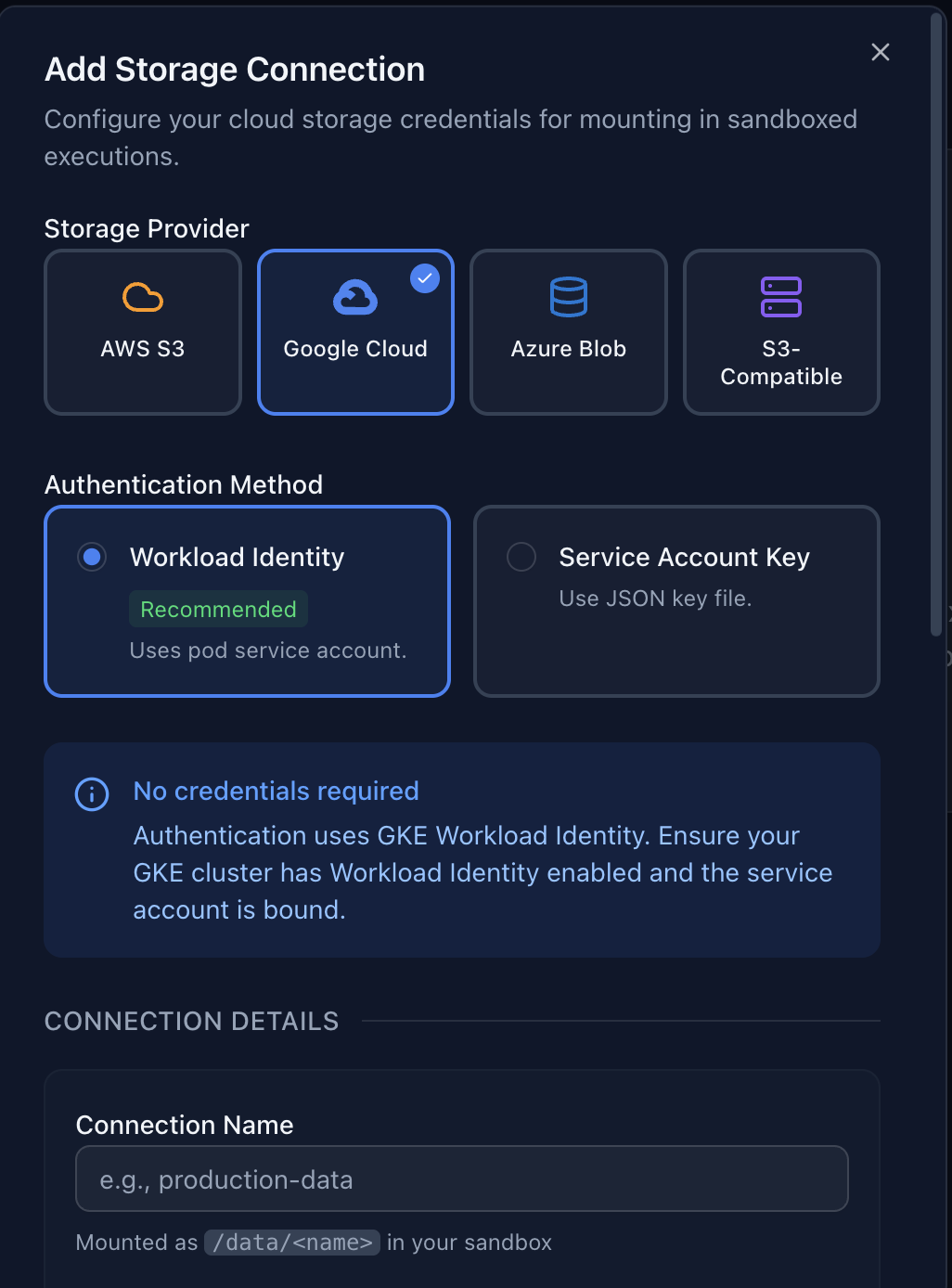

Your data stays in your bucket. Zero copies, zero egress, zero compromise.

Other sandbox providers require uploading data to their cloud, which means extra copies, sync overhead, egress costs, and data residency concerns. Baponi mounts your existing bucket directly. Your data never moves.

How it works

Your bucket mounts as /data inside the sandbox. Agent code reads and writes files normally without knowing it's cloud storage. Bucket credentials live in a separate process with kernel-enforced isolation. No sandbox code can reach them.

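Because the bucket appears as an ordinary directory, agent code needs no storage SDK. A minimal sketch using only the standard library; the `DATA_ROOT` override, the `users/<id>/report.csv` layout, and the function names are illustrative assumptions, not Baponi settings.

```python
import csv
import os
from pathlib import Path

# Inside the sandbox the bucket is mounted at /data. DATA_ROOT is a
# hypothetical override so the sketch also runs against a local directory.
DATA_ROOT = Path(os.environ.get("DATA_ROOT", "/data"))


def save_report(user_id: str, rows: list[dict[str, str]], root: Path = DATA_ROOT) -> Path:
    """Write non-empty `rows` as CSV under an illustrative per-user prefix."""
    out_dir = root / "users" / user_id
    out_dir.mkdir(parents=True, exist_ok=True)  # ordinary mkdir, no storage SDK
    out_path = out_dir / "report.csv"
    with out_path.open("w", newline="") as f:
        writer = csv.DictWriter(f, fieldnames=list(rows[0]))
        writer.writeheader()
        writer.writerows(rows)
    return out_path


def load_report(user_id: str, root: Path = DATA_ROOT) -> list[dict[str, str]]:
    """Read the CSV back with ordinary file I/O."""
    with (root / "users" / user_id / "report.csv").open(newline="") as f:
        return list(csv.DictReader(f))
```

Assuming the mount root maps to the bucket root, `save_report("user-123", rows)` would appear in the bucket as `users/user-123/report.csv`.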

Enterprise: When deployed self-hosted in your VPC, BYOB data never leaves your infrastructure. No data processing agreement needed for the execution layer. Your compliance posture covers the deployment.

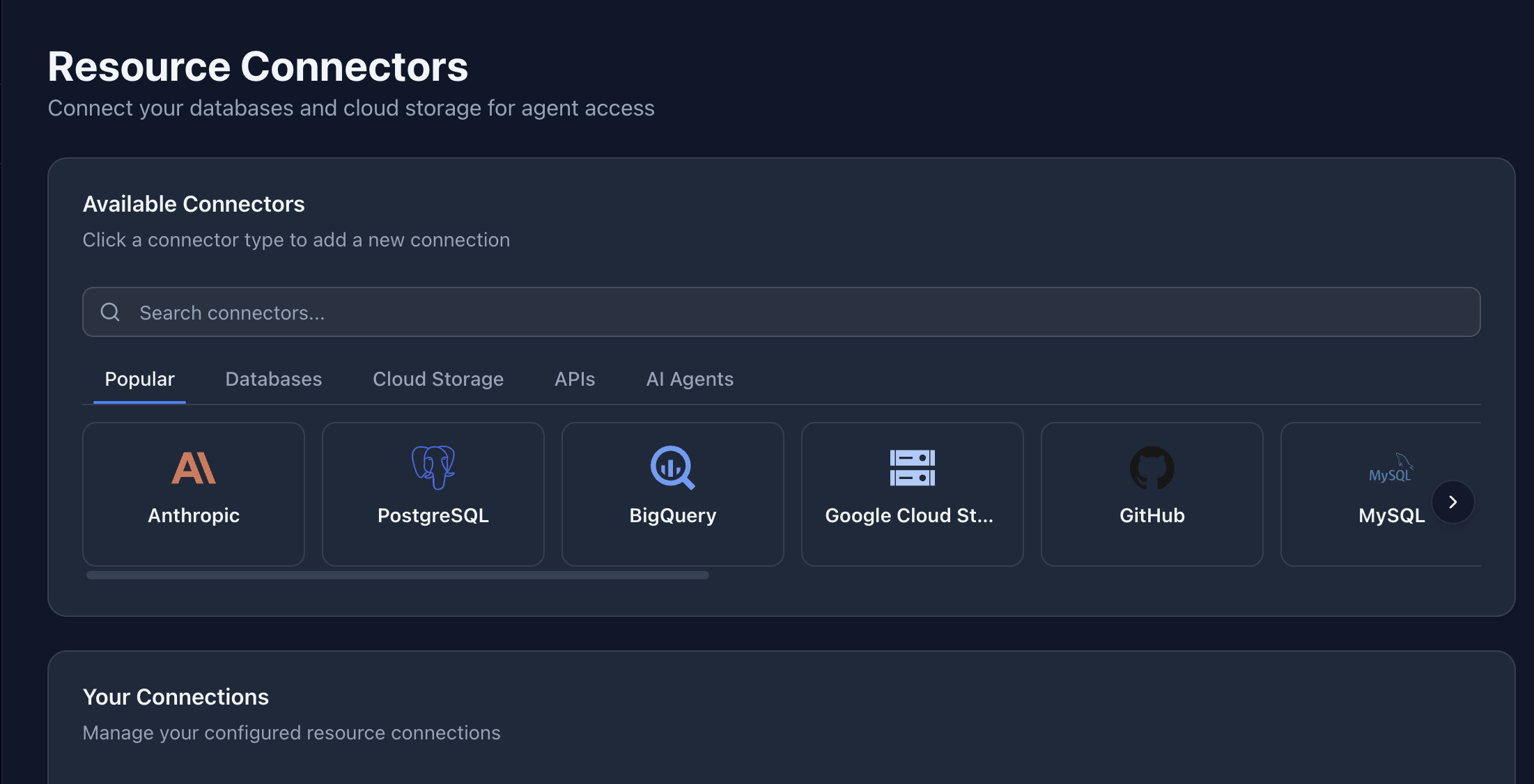

A growing ecosystem of connectors. Centralized credential management for every AI agent.

AI agents need database, API, and storage access to be useful. But managing credentials per agent, per environment, per API key is an operational nightmare. Baponi centralizes credential management in the admin UI. Configure once, control per API key, audit everything. Your AI agent's code just works with standard CLIs and SDKs.

Service account credentials

Simple. Upload a service account key or connection string. Baponi injects it as native config files at runtime: .pgpass, .my.cnf, application default credentials, .gitconfig. Your AI agent's code runs psql, git, or bq directly, with no credential setup. Managed centrally, controlled per API key, fully audited.

Workload Identity Federation

Zero secrets. Connect via GCP Workload Identity or AWS IAM Roles. No raw credentials stored anywhere. The sandbox receives short-lived tokens through identity federation. Nothing to rotate, nothing to leak.

LLMs have seen millions of examples of psql, git, and bq in their training data. No custom MCP tools needed. Baponi makes the tools your AI already knows actually work.

Enterprise: With self-hosted deployment, credentials never leave your VPC. Use Workload Identity Federation to eliminate stored secrets entirely. Your secrets manager, your policies, your infrastructure.

Never pay for idle compute. Every sandbox spins up in under 20ms and tears down after each call.

Other platforms keep containers running between calls and bill for idle time. Baponi's sub-20ms startup enables a per-call model: spin up, execute, snapshot, tear down. Resume the same session days later. Your agents never manage container lifecycle.

How the per-call lifecycle works

Baponi creates an isolated sandbox in under 20ms. No pre-warmed pool, no idle containers waiting.

Your agent's code runs with full access to mounted storage, injected credentials, and installed packages.

Execution finishes. The sandbox state is snapshotted and the sandbox is destroyed. Billing stops.

The next call with the same thread_id restores the snapshot, whether that is hours, days, or weeks later. The agent never manages container lifecycle.

What this means for your bill and your code

- Zero idle costs. You're billed per execution, not per second of compute. No sandbox runs when no code is running. Other platforms charge for containers sitting idle between agent calls.

- No lifecycle management. Your agent code doesn't start, stop, poll, or clean up containers. Just call the API. Baponi handles the rest.

- Stateful when you need it. Pass a thread_id to persist state across calls. Installed packages, environment variables, and filesystem changes survive between executions, even across days or weeks.

- Scale without provisioning. No capacity planning, no auto-scaling rules, no container pool tuning. Every sandbox is created on demand and destroyed when done.

The speed behind this model: sub-20ms is the cold-start time on every call, not an average. That is what makes tearing down after each call economically viable, instead of keeping containers running.

Multi-layer isolation built for running untrusted LLM-generated code.

Baponi's sandboxing technology was built by security engineers from the ground up for one purpose: safely executing arbitrary, LLM-generated code from untrusted sources. Not retrofitted from general-purpose compute.

Multi-layer sandboxing

Proprietary isolation technology written in Rust. Multiple independent security boundaries. A vulnerability in one layer doesn't compromise the sandbox.

BYOB credential isolation

BYOB storage credentials are held in a separate process with kernel-enforced isolation, never inside the sandbox. Code reads and writes /data as a local directory. No mechanism exists for sandbox code to access the underlying storage credentials.

Immutable audit trail

Full audit log on every execution, every API call, every admin action. SOC 2 compliant. Unlimited retention on Enterprise. Included on every plan, even Free.

$10,000 bug bounty

We put money behind our security claims. If a researcher finds a sandbox escape or credential leak, we pay up to $10,000. Confidence you can verify.

Enterprise: zero-trust by architecture. When Baponi runs in your VPC, there is no trust to extend. Your data, your code, and your credentials stay inside your infrastructure. No vendor data processing agreement needed for the execution layer. Your existing compliance controls cover the deployment.

Import your OCI image. Your AI agents work with your stack, not a generic sandbox.

Generic sandbox environments lack your company's packages, internal tools, and configurations. Baponi lets you import any OCI container image pre-loaded with exactly what your agents need.

- Company-specific Python packages and internal libraries

- Pre-configured CLI tools and development environments

- Data science stacks with ML frameworks and model files

- Compliance-hardened base images with your security policies

Free: 1 custom image. Pro & Enterprise: unlimited.

```dockerfile
# Your Dockerfile
FROM python:3.12-slim

# Your company's packages
RUN pip install --no-cache-dir pandas numpy scikit-learn \
    your-internal-ml-library \
    company-data-connectors

# Your tools and configs
COPY .pylintrc /etc/pylintrc
COPY internal-certs/ /usr/local/share/ca-certificates/
# Register the copied certificates (files need a .crt extension) with the system trust store
RUN update-ca-certificates

# Your AI agents get all of this out of the box
```

MCP, REST API, or Python SDK. First execution in under 60 seconds.

Three integration paths. Pick the one that fits your stack. Every path gets you to running code in a single API call.

MCP Protocol

Streamable HTTP transport. Works with Claude Desktop, Cursor, Windsurf, and any MCP-compatible client. Add the server URL and you're done.

REST API

Direct HTTP. Language-agnostic. Standard Bearer token auth. One POST to execute code, one response with stdout, stderr, and exit code.

Python SDK

pip install baponi

Sync and async clients, retry logic, typed responses. Framework integrations for 5 major AI platforms.

Framework integrations

Each integration provides a ready-to-use code_sandbox tool that works with the framework's native tool system.
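To picture what a framework-native tool looks like to the model, here is a hypothetical hand-written equivalent in the Anthropic Messages API tool format (`name`, `description`, `input_schema`). The parameter names and language identifiers are assumptions; the SDK integrations ship their own definitions, so this is a sketch rather than the SDK's schema.

```python
# Hypothetical hand-written equivalent of a `code_sandbox` tool definition.
CODE_SANDBOX_TOOL = {
    "name": "code_sandbox",
    "description": (
        "Run Bash, Python, or Node.js code in an isolated sandbox and "
        "return stdout, stderr, and the exit code."
    ),
    "input_schema": {
        "type": "object",
        "properties": {
            "language": {"type": "string", "enum": ["bash", "python", "node"]},
            "code": {"type": "string", "description": "Source code to execute."},
            "thread_id": {
                "type": "string",
                "description": "Optional; reuse to keep files and packages between calls.",
            },
        },
        "required": ["language", "code"],
    },
}


def validate_tool_input(tool_input: dict) -> dict:
    """Check a model-produced tool input against the schema above."""
    schema = CODE_SANDBOX_TOOL["input_schema"]
    missing = [k for k in schema["required"] if k not in tool_input]
    if missing:
        raise ValueError(f"missing required fields: {missing}")
    unknown = set(tool_input) - set(schema["properties"])
    if unknown:
        raise ValueError(f"unexpected fields: {sorted(unknown)}")
    allowed = schema["properties"]["language"]["enum"]
    if tool_input["language"] not in allowed:
        raise ValueError(f"language must be one of {allowed}")
    return tool_input
```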

3 languages

Bash, Python, and Node.js. Your AI agent picks the right language for each task.

Full internet access from day one

Unrestricted outbound access on every plan, including Free. No whitelist, no approval process, no paid-tier upgrade. Your AI agents can call APIs, install packages, and access any service.

Full platform capabilities at a glance

Every plan includes BYOB storage, audit logging, MCP + REST, and RBAC. Upgrade for more compute power, longer runs, and enterprise deployment.

| | Free | Pro | Enterprise |
|---|---|---|---|
| **Execution** | | | |
| Max CPU per sandbox | 1 | 4 | Unlimited |
| Max RAM per sandbox | 1 GiB | 4 GiB | Unlimited |
| Max execution time | 60s | 1 hour | Unlimited |
| Concurrent executions | 5 | 100 | Unlimited |
| **Storage & Data** | | | |
| Custom OCI images | Default + 1 custom | Default + unlimited | Default + unlimited |
| **Security & Compliance** | | | |
| Audit log retention | 1 day | 30 days | Unlimited |
| API keys | 10 | Unlimited | Unlimited |
| SSO / OIDC | - | - | Included |
| Self-hosted / VPC deployment | - | - | Included |

Pricing and specs current as of April 2026. See pricing for details.

Feature questions

What happens to data and credentials after execution ends?

The sandbox is destroyed when execution ends. Files written to BYOB storage persist in your bucket. Files in the sandbox's temporary filesystem are destroyed. BYOB storage credentials are held in a separate mount process and never enter the sandbox. Connector credentials injected as config files are wiped with the sandbox. There is no state leakage between executions unless you explicitly use a thread_id for persistence.

Can sandbox code access the internet?

Yes. Full, unrestricted outbound internet access is available from day one on every plan, including Free. No whitelist, no approval process, no paid-tier upgrade required. Your AI agents can call APIs, download packages, and access any service.

How does BYOB storage differ from managed storage?

BYOB (Bring Your Own Bucket) mounts your existing S3, GCS, or Azure Blob bucket as a local directory inside the sandbox. Data never leaves your cloud account. No copies, no sync. Managed storage is 10 GB of Baponi-hosted persistent storage for teams that don't need to bring their own bucket. Both options are available on every plan.

Can I install custom packages not in the base image?

Yes, two ways. For quick installs, your AI agent can run pip install or npm install during execution. Packages persist within a thread_id session. For production workloads, import a custom OCI container image pre-loaded with your company's packages, internal tools, and configurations. The Free tier includes 1 custom image; Pro and Enterprise get unlimited.

Does BYOB storage support random writes and standard filesystem operations?

Yes. Baponi's BYOB mount supports random reads, random writes, seeks, appends, and directory listings. Standard libraries like pandas and CLI tools that expect a local filesystem work without modification. Unlike some similar solutions that only support sequential writes and simple read operations, Baponi handles the full range of file I/O that real-world code expects.
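The operations in question look like this in ordinary Python. A minimal sketch using only standard file calls; pointed at a path under /data, each function exercises one of the operations listed above (random write, append, seek plus random read, directory listing). The function names and the `ledger.txt` file in the usage example are illustrative assumptions.

```python
import os
from pathlib import Path


def patch_bytes(path: Path, offset: int, data: bytes) -> None:
    """Random write: overwrite bytes in place at `offset` without rewriting the file."""
    with path.open("r+b") as f:
        f.seek(offset)
        f.write(data)


def append_line(path: Path, line: str) -> None:
    """Append to the end of an existing file."""
    with path.open("a") as f:
        f.write(line + "\n")


def read_range(path: Path, offset: int, length: int) -> bytes:
    """Random read: seek to `offset` and fetch `length` bytes."""
    with path.open("rb") as f:
        f.seek(offset)
        return f.read(length)


def list_dir(path: Path) -> list[str]:
    """Directory listing, sorted for stable output."""
    return sorted(os.listdir(path))
```

For example, with `p = Path("/data/ledger.txt")`, `patch_bytes(p, 5, b"BB")` rewrites two bytes in the middle of the file while leaving the rest untouched.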

How does Baponi compare to VM-based sandbox providers?

Baponi uses proprietary multi-layer sandboxing written in Rust by security engineers, purpose-built for AI agent workloads. This gives us sub-20ms overhead on every execution compared to 100-500ms for VM-based alternatives, without sacrificing isolation. The architecture was designed from the ground up for running LLM-generated untrusted code, not adapted from general-purpose compute infrastructure.

What does the enterprise self-hosted deployment include?

The entire Baponi platform deploys in your Kubernetes cluster via a single Helm chart. Your data, your code, and your credentials never leave your VPC. You bring your own identity provider (Okta, Azure AD, any OIDC), your own PostgreSQL, and your own cloud storage. Baponi's security engineers work directly with your DevOps team for deployment and ongoing support.

Is audit logging really free on all plans?

Yes. Full SOC 2 compliant audit logging is included on every plan, including Free. Every execution, every API call, every admin action is logged and immutable. Retention varies by plan: 1 day on Free, 30 days on Pro, unlimited on Enterprise. Most competitors gate audit logging behind enterprise pricing.

Which AI frameworks are supported?

Baponi provides native Python SDK integrations for Anthropic Claude, OpenAI Agents, Google Gemini, LangChain, and CrewAI. Install with pip install baponi[framework]. Each integration provides a ready-to-use code_sandbox tool that works with the framework's native tool system. MCP support works with Claude Desktop, Cursor, Windsurf, and any MCP-compatible client. The REST API works from any language.

Start building for free

1,000 free credits/month. Every feature included. No credit card required.